As Edge AI continues to gain traction, the debate over the best processing units for these applications intensifies. Among the top contenders are Neural Processing Units (NPUs) and Graphics Processing Units (GPUs). Both have their strengths and weaknesses, and understanding these can help in making informed decisions for deploying AI solutions at the edge.

Introduction to Edge AI

Edge AI refers to the deployment of artificial intelligence algorithms on devices at the edge of the network, closer to the data source. This approach reduces latency, enhances privacy, and can operate with limited or no internet connectivity. Common applications include smart cameras, autonomous vehicles, and industrial IoT devices.

GPUs: The Pioneers of Parallel Processing

GPUs were originally designed to accelerate graphics rendering by handling multiple operations in parallel. Over time, their parallel processing capabilities made them suitable for training and inference of AI models. Here are some key attributes of GPUs:

- High Parallelism: GPUs contain thousands of cores designed for parallel operations, making them excellent for workloads that can be split into many small, independent operations, such as the matrix multiplications at the core of neural networks.

- Flexibility: GPUs are highly programmable and can be adapted to various types of computations beyond graphics, including AI workloads.

- Mature Ecosystem: With a long history in both gaming and AI, GPUs benefit from a mature ecosystem, including extensive software support such as NVIDIA's CUDA platform and frameworks like TensorFlow.

However, GPUs also have some limitations:

- Power Consumption: GPUs, especially those designed for high-performance computing, can consume significant power, which is a critical factor for edge devices.

- Size and Cost: High-performance GPUs can be bulky and expensive, which might not be ideal for compact edge applications.

NPUs: Purpose-Built for AI

NPUs are hardware accelerators built specifically for neural network computations. Unlike GPUs, which were repurposed for AI, NPUs are designed from the ground up for this workload. Key features of NPUs include:

- Optimized Architecture: NPUs are designed to handle the specific data flow and computational patterns of neural networks, resulting in more efficient processing.

- Low Power Consumption: NPUs typically consume less power than GPUs, making them suitable for battery-operated edge devices.

- Compact and Cost-Effective: NPUs are often smaller and cheaper to produce than high-end GPUs, facilitating integration into various edge devices.

Nevertheless, NPUs have their own challenges:

- Specialization: While NPUs excel at AI tasks, they might not be as flexible as GPUs for other types of parallel computations.

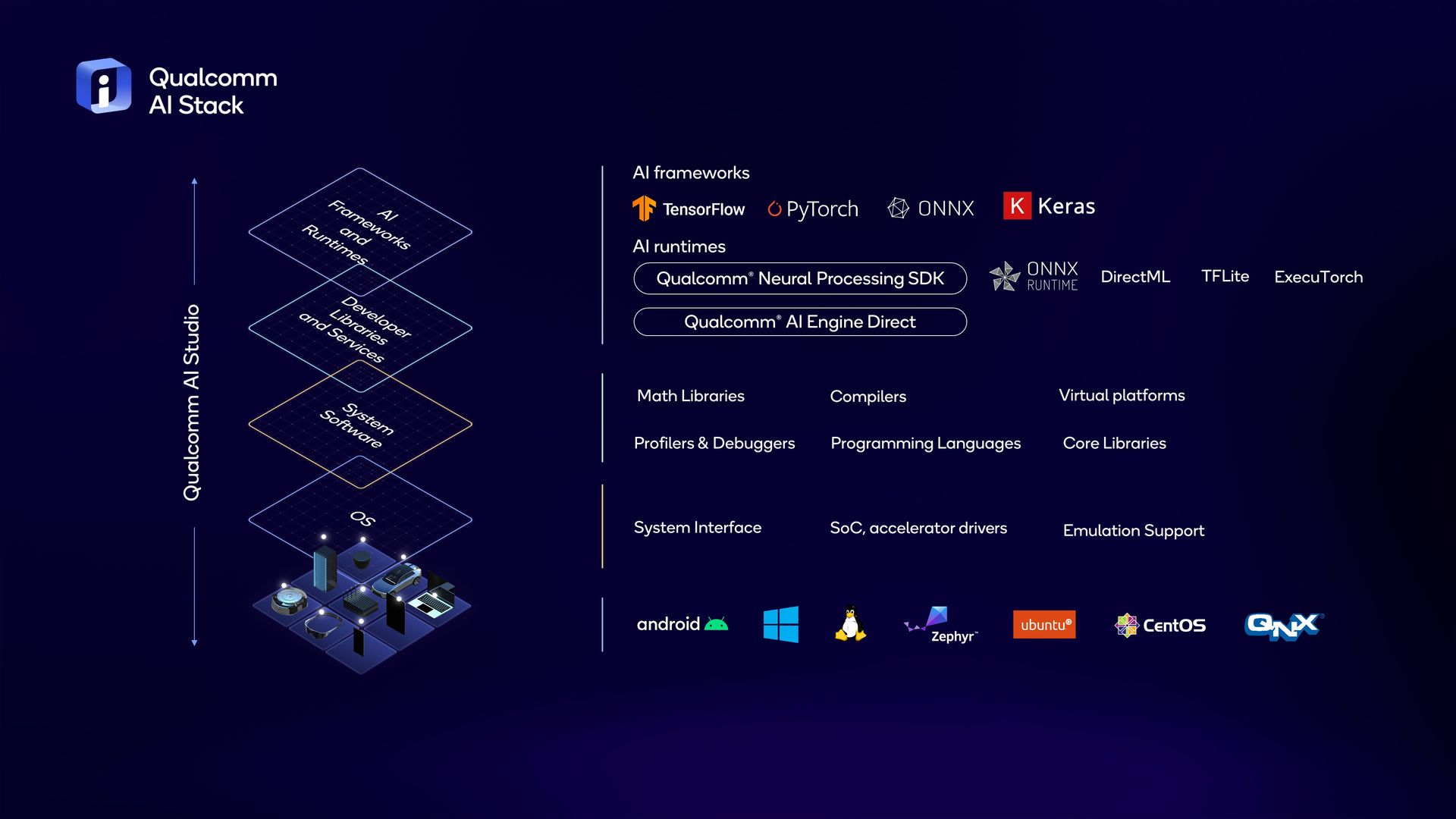

- Ecosystem Maturity: The NPU ecosystem is still growing, and although support for software frameworks is improving, it is not as comprehensive as that for GPUs.

Performance Comparison: NPUs vs. GPUs

To understand the performance differences between NPUs and GPUs for Edge AI, we can evaluate them across several dimensions:

- Latency:
  - NPU: Typically lower latency for neural network inference, thanks to a specialized architecture and optimized data pathways.
  - GPU: Generally higher latency than NPUs, but still capable of real-time processing with optimized implementations.

- Energy Efficiency:
  - NPU: More energy-efficient, which is crucial for battery-operated edge devices.
  - GPU: Higher power consumption, which can be a limiting factor for some edge applications.

- Throughput:
  - NPU: High throughput for specific AI workloads, particularly inference.
  - GPU: High throughput across a wide range of tasks, with significant advantages in training larger models.

- Scalability:
  - NPU: Scales by integrating multiple NPUs in a single device, but each NPU is optimized for specific tasks.
  - GPU: Highly scalable across different tasks and workloads, from edge to data center applications.

- Cost:
  - NPU: Typically lower cost, suitable for mass production in consumer and industrial devices.
  - GPU: Higher cost, justified by versatility and high performance.
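The latency, throughput, and energy dimensions above can be measured on real hardware rather than assumed. The following is a minimal sketch, not a vendor benchmark: `benchmark`, `run_inference`, and `avg_power_watts` are hypothetical names introduced here for illustration. It assumes that `run_inference` blocks until the result is ready (asynchronous accelerator APIs need an explicit synchronization call inside it) and that average power is measured separately, for example with an external power meter. Energy per inference follows from energy (J) = power (W) × time (s).

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(run_inference: Callable[[], None],
              avg_power_watts: float,
              batch_size: int = 1,
              warmup: int = 5,
              iterations: int = 50) -> Dict[str, float]:
    """Time a blocking inference callable and derive comparison metrics.

    avg_power_watts is an externally measured figure (an assumption of
    this sketch); nothing here reads power from the device.
    """
    if iterations < 2:
        raise ValueError("iterations must be at least 2")

    # Warm-up runs let caches, clock speeds, and lazy initialization settle.
    for _ in range(warmup):
        run_inference()

    latencies = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_inference()
        latencies.append(time.perf_counter() - start)

    median_s = statistics.median(latencies)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95_s = statistics.quantiles(latencies, n=20)[-1]

    return {
        "median_latency_ms": median_s * 1e3,
        "p95_latency_ms": p95_s * 1e3,
        "throughput_per_s": batch_size / median_s,
        # Energy per input in millijoules: power x time, divided by batch size.
        "energy_per_inference_mj": avg_power_watts * median_s / batch_size * 1e3,
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a real NPU or GPU inference call.
    result = benchmark(lambda: time.sleep(0.002), avg_power_watts=2.0)
    for name, value in result.items():
        print(f"{name}: {value:.3f}")
```

Running the same harness against each candidate device, with the same model and input, gives comparable numbers for the dimensions listed above. The median and 95th percentile are reported instead of the mean because occasional slow runs can otherwise skew the result.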

Practical Considerations

When choosing between NPUs and GPUs for Edge AI, several practical considerations come into play:

- Application Requirements: If the primary task is running neural network inference with stringent power and latency constraints, NPUs might be the better choice. For applications requiring versatility and higher computational power, GPUs are more suitable.

- Development Ecosystem: Consider the availability of development tools, libraries, and support for each type of processor. GPUs currently have an edge here, but NPU support is growing.

- Deployment Environment: For constrained environments where size, power consumption, and cost are critical, NPUs often have the upper hand. In environments where performance and flexibility are prioritized, GPUs shine.
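As a rough illustration, the considerations above can be written down as a first-pass screening rule. This is only a sketch under stated assumptions: the `EdgeRequirements` fields, the `suggest_accelerator` function, and the 5 W threshold are hypothetical choices made for this example, not industry standards, and a real decision should be confirmed by benchmarking the actual model on candidate hardware.

```python
from dataclasses import dataclass


@dataclass
class EdgeRequirements:
    power_budget_watts: float      # sustained power available to the accelerator
    inference_only: bool           # True if no on-device training is needed
    needs_general_compute: bool    # True if non-neural parallel work must also run
    model_supported_on_npu: bool   # True if the NPU toolchain supports the model


def suggest_accelerator(req: EdgeRequirements,
                        low_power_threshold_watts: float = 5.0) -> str:
    """Return "NPU", "GPU", or "either" as a first-pass suggestion.

    The default threshold is an illustrative assumption, not a measured value.
    """
    # Versatility: training or general-purpose parallel work favors a GPU.
    if not req.inference_only or req.needs_general_compute:
        return "GPU"
    # Ecosystem: without toolchain support, the NPU is not a practical option.
    if not req.model_supported_on_npu:
        return "GPU"
    # Constrained deployment: a tight power budget favors an NPU.
    if req.power_budget_watts <= low_power_threshold_watts:
        return "NPU"
    # No constraint decides the question; benchmark both.
    return "either"


if __name__ == "__main__":
    smart_camera = EdgeRequirements(
        power_budget_watts=2.0,
        inference_only=True,
        needs_general_compute=False,
        model_supported_on_npu=True,
    )
    print(suggest_accelerator(smart_camera))
```

The order of the checks mirrors the list above: versatility and ecosystem support act as hard filters, and the power budget only decides the outcome once both processor types are viable.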

Conclusion

Both NPUs and GPUs have unique advantages that make them suitable for different Edge AI applications. NPUs offer specialized, efficient processing for AI tasks with lower power consumption, while GPUs provide high flexibility and computational power. The choice between the two depends on specific application requirements, constraints, and the overall deployment ecosystem.

As Edge AI continues to evolve, so will the capabilities and applications of NPUs and GPUs, potentially blurring the lines between their current roles and leading to even more integrated and efficient AI solutions at the edge.